Overview

Building AI agents can be challenging because documentation is scattered and the interaction between planning, memory, and tool execution is often unclear. This article presents a concise, progressive roadmap broken into 12 sessions that guide development from a minimal loop to a fully autonomous multi-agent system. The approach emphasizes incremental complexity, reproducible patterns, and practical deployment considerations.

The Core Agent Loop

Most agent architectures share a minimal kernel that repeats until the model stops requesting tools. In pseudocode form the pattern looks like: while True: response = client.messages.create(messages, tools); messages.append(response); if response.stop_reason != "tool_use": break; for tool_call in response.content: result = execute_tool(tool_call.name, tool_call.input); messages.append(result). Appending the model's own response before the tool results keeps the history complete for the next call. This loop keeps orchestration separate from tool implementations, so the control flow itself stays fixed and predictable while new capabilities are added as modular tools.
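
The loop can be made concrete as a minimal Python sketch. The pseudocode does not pin down a specific SDK, so this example swaps the real model client for a hypothetical `ScriptedClient` that replays canned responses. `Response`, `ToolCall`, `run_agent`, and the `max_turns` cap are likewise illustrative names chosen here, not part of any library.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolCall:
    id: str
    name: str
    input: dict[str, Any]


@dataclass
class Response:
    stop_reason: str
    text: str = ""
    tool_calls: list[ToolCall] = field(default_factory=list)


class ScriptedClient:
    """Hypothetical stand-in for a model client: replays canned responses in order."""

    def __init__(self, script: list[Response]) -> None:
        self._script = list(script)

    def create(self, messages: list[dict], tools: dict[str, Callable]) -> Response:
        return self._script.pop(0)


def execute_tool(tools: dict[str, Callable], call: ToolCall) -> str:
    # Look up the tool by name and call it with the model-supplied arguments.
    return str(tools[call.name](**call.input))


def run_agent(client, tools: dict[str, Callable], prompt: str, max_turns: int = 10) -> list[dict]:
    messages: list[dict] = [{"role": "user", "content": prompt}]
    # A turn cap (an assumption of this sketch) replaces `while True` as a safety bound.
    for _ in range(max_turns):
        response = client.create(messages, tools)
        # Record the model's turn before any tool results.
        messages.append(
            {"role": "assistant", "content": response.text, "tool_calls": response.tool_calls}
        )
        if response.stop_reason != "tool_use":
            break
        for call in response.tool_calls:
            result = execute_tool(tools, call)
            messages.append({"role": "tool", "tool_call_id": call.id, "content": result})
    return messages
```

Running `run_agent` with a script of one tool request followed by a final answer yields a four-message history: user, assistant, tool, assistant. Replacing `ScriptedClient` with a real client should only require adapting `create` and the message shapes to that provider's API.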

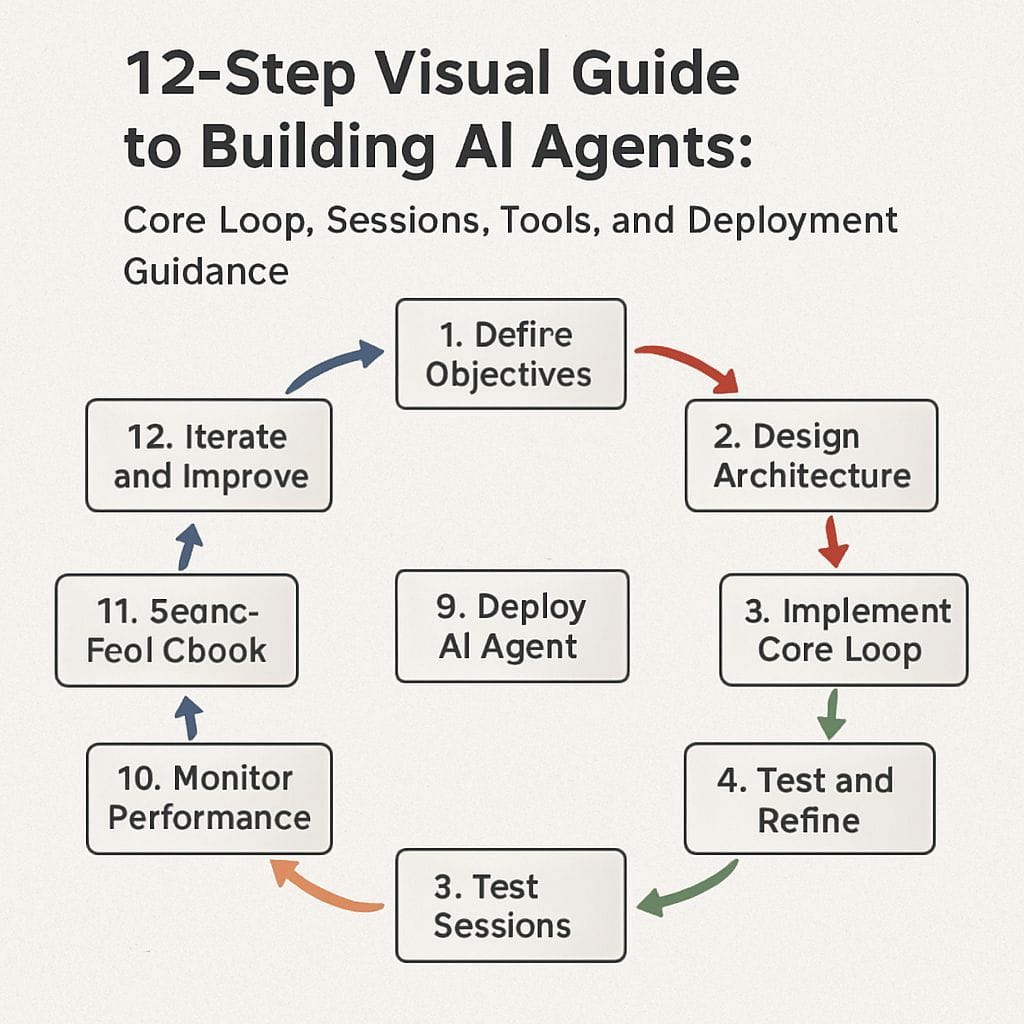

The 12 Sessions Roadmap

- 01 The Agent Loop: The minimal kernel consisting of a while-loop and a single tool to validate the orchestration pattern.

- 02 Tools: Register new tools into a dispatch map so the loop remains unchanged while capabilities expand.

- 03 TodoWrite: Add a planning phase in which the agent writes out its steps before acting, then works through them one at a time to prevent drift.

- 04 Subagents: Use isolated message contexts per subtask to keep the main conversation history clean and bounded.

- 05 Memory: Introduce short-term and long-term memory stores to persist facts and revisit prior decisions.

- 06 Tool Chaining: Support multi-step tool sequences where outputs feed subsequent tool inputs for complex workflows.

- 07 Parallelism: Run subagents or tasks concurrently and reconcile outputs into a consolidated plan or result.

- 08 Safety and Guardrails: Add constraints, validation checks, and human-in-the-loop breakpoints to reduce undesired behavior.

- 09 Observability: Implement logs, metrics, and traces for debugging and performance tuning.

- 10 Resource Management: Introduce budgeting for API calls, compute, and memory to control operational costs.

- 11 Specialization: Create domain-specific toolsets and knowledge bases for improved precision and speed.

- 12 Multi-Agent Orchestration: Combine agents with roles, communication channels, and arbitration rules for complex autonomous systems.
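
Session 02's dispatch map is the piece most of the later sessions build on, so a small sketch may help. The version below assumes a module-level `TOOLS` dictionary and a `tool` decorator; both names, along with the two example tools, are hypothetical and chosen only for illustration.

```python
from __future__ import annotations

from typing import Any, Callable

# Dispatch map: tool name -> Python callable. The loop only ever consults this dict.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(name: str):
    """Register a function under `name` without touching the loop."""

    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        if name in TOOLS:
            raise ValueError(f"duplicate tool name: {name}")
        TOOLS[name] = fn
        return fn

    return register


@tool("word_count")
def word_count(text: str) -> int:
    return len(text.split())


@tool("shout")
def shout(text: str) -> str:
    return text.upper()


def dispatch(name: str, arguments: dict[str, Any]) -> str:
    """Run one tool call and always return a string the model can read."""
    handler = TOOLS.get(name)
    if handler is None:
        return f"error: unknown tool '{name}'"
    try:
        return str(handler(**arguments))
    except Exception as exc:  # report failures to the model instead of crashing the loop
        return f"error: {type(exc).__name__}: {exc}"
```

Adding a capability is then a matter of decorating one more function. Because `dispatch` returns plain strings, the output of one call can be passed as the input of the next, which is the simplest form of the tool chaining described in session 06.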

When to Build an Agent

An agent is appropriate when a system needs to make sequential decisions, handle branching workflows, or coordinate multiple tools and knowledge sources. Building one means rethinking how decisions are made and how complexity is managed, because part of the control flow is delegated to the model instead of being fixed in code. Conventional automation may suffice for linear tasks, while an agentic approach adds value for tasks that need planning, memory, and adaptive tool use.

Key Architectural Components

- Models: Select appropriately sized language models based on latency, budget, and accuracy requirements.

- Tools: Provide the functions the agent can call, whether stateless or stateful, including external APIs, databases, and search.

- Orchestration: Implement the core loop and dispatch logic that sequences reasoning, tool use, and plan updates.

- Memory: Design short-term context windows and long-term retrieval systems for persistent knowledge.

- Safety: Add guardrails, input validation, and human oversight where necessary.
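
As one illustration of the memory component, the sketch below pairs a bounded short-term window with a long-term store. The `AgentMemory` class and its keyword-overlap retrieval are assumptions made for this example; a production system would more likely use embeddings or a database for long-term recall.

```python
from __future__ import annotations

from collections import deque


class AgentMemory:
    """Hypothetical memory: a sliding window of recent messages plus stored facts."""

    def __init__(self, window: int = 4) -> None:
        # deque(maxlen=...) silently drops the oldest message once the window is full.
        self.short_term: deque[str] = deque(maxlen=window)
        self.long_term: list[str] = []

    def observe(self, message: str) -> None:
        self.short_term.append(message)

    def remember(self, fact: str) -> None:
        if fact not in self.long_term:
            self.long_term.append(fact)

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Return up to k stored facts, ranked by how many words they share with the query."""
        terms = set(query.lower().split())
        scored = [(len(terms & set(fact.lower().split())), fact) for fact in self.long_term]
        matches = [(score, fact) for score, fact in scored if score > 0]
        matches.sort(key=lambda pair: pair[0], reverse=True)
        return [fact for _, fact in matches[:k]]

    def build_context(self, query: str) -> list[str]:
        # Retrieved facts first, then the recent conversation window.
        return self.recall(query) + list(self.short_term)
```

The split mirrors the bullet above: `short_term` bounds what is sent to the model on each turn, while `long_term` persists facts that can be pulled back in only when a query makes them relevant.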

Popular Frameworks and Tools

- LangChain and similar libraries provide building blocks for prompt management, chains, and tool integrations but may need custom orchestration for agent behavior.

- Cursor, Claude Code, Replit agents, and other platforms offer higher-level integrations for generating and running code or deploying agents, which can accelerate prototyping.

- NotebookLM and Vellum exemplify alternative interfaces that treat agents as long-term thinking partners or prompt-driven workflows rather than purely code-first systems.

Deployment Considerations

Successful agentic deployments require attention to business factors including measurable objectives, reliability, cost control, and compliance. Six common factors to evaluate are clarity of task scope, data quality and access, observability, fail-safe behavior, human oversight, and ongoing maintenance. Pilot deployments help validate assumptions before scaling an agent to production.
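
Two of these factors, cost control and human oversight, can be enforced in code at the point where an action is executed. The following is a sketch under stated assumptions: `CallBudget`, `run_guarded`, and the `RISKY_ACTIONS` set are hypothetical names, and the approval callback stands in for whatever review process a team actually uses.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

# Hypothetical list of actions that require a human decision before running.
RISKY_ACTIONS = {"delete_records", "send_email"}


class BudgetExceeded(RuntimeError):
    """Raised when the agent tries to exceed its call budget."""


@dataclass
class CallBudget:
    max_calls: int
    used: int = 0

    def charge(self) -> None:
        if self.used >= self.max_calls:
            raise BudgetExceeded(f"budget of {self.max_calls} calls exhausted")
        self.used += 1


def run_guarded(
    name: str,
    action: Callable[[], str],
    budget: CallBudget,
    approve: Callable[[str], bool],
) -> str:
    # Human-in-the-loop breakpoint: risky actions run only if approved.
    if name in RISKY_ACTIONS and not approve(name):
        return f"skipped: {name} was not approved"
    # Fail-safe: stop hard once the budget is spent (denied actions are not charged).
    budget.charge()
    return action()
```

Raising an exception when the budget runs out is a deliberate choice in this sketch: it forces the caller to decide what a safe stop looks like instead of letting the agent continue silently.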

Practical Next Steps

- Start Small: Implement the minimal loop and one tool to validate the orchestration pattern.

- Iterate Incrementally: Add planning, memory, and observability one session at a time, following the 12-session roadmap.

- Measure and Harden: Use metrics to identify failure modes and add safety controls where needed.

- Choose Tools Wisely: Evaluate frameworks for fit against requirements such as latency, maintainability, and cost.
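
For the "Measure and Harden" step, even a very small metrics wrapper can show which tools fail most often. The sketch below is a minimal in-process example; `ToolMetrics` and its method names are hypothetical, and a real deployment would probably export these numbers to a logging or tracing system.

```python
from __future__ import annotations

import time
from collections import Counter, defaultdict
from typing import Any, Callable


class ToolMetrics:
    """Hypothetical per-tool counters for calls, failures, and elapsed time."""

    def __init__(self) -> None:
        self.calls: Counter[str] = Counter()
        self.failures: Counter[str] = Counter()
        self.seconds: defaultdict[str, float] = defaultdict(float)

    def track(self, name: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Call fn, recording the outcome under `name`; exceptions are re-raised."""
        start = time.perf_counter()
        self.calls[name] += 1
        try:
            return fn(*args, **kwargs)
        except Exception:
            self.failures[name] += 1
            raise
        finally:
            # Runs on both success and failure, so timing covers every call.
            self.seconds[name] += time.perf_counter() - start

    def failure_rate(self, name: str) -> float:
        calls = self.calls[name]
        return self.failures[name] / calls if calls else 0.0
```

Wrapping each tool invocation in `track` leaves the tools themselves unchanged, and a high `failure_rate` for one tool is a natural signal for where to add validation or a safety control first.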

This structured approach makes agent development more predictable and easier to reason about. The progressive sessions provide a repeatable path from a simple loop to a robust multi-agent system while balancing practicality and safety.
